What is the most offensive possible token?

Every time I post about my research someone asks "wait what is this" and I end up typing the same explanation in twitter DMs at 2 AM, so I finally asked Claude Code to make me a website. I'm not a web designer. I don't have TIME to be a web designer. I'm trying to find the vector that turns a word into a weapon. So— here. This is that. Scroll down and I'll explain.

The Problem

Okay so you're here, which means you either followed a link or you searched for something that led you to this page, and either way you're probably wondering what this is about, and I'm going to tell you, but I need you to understand something about embedding spaces first.

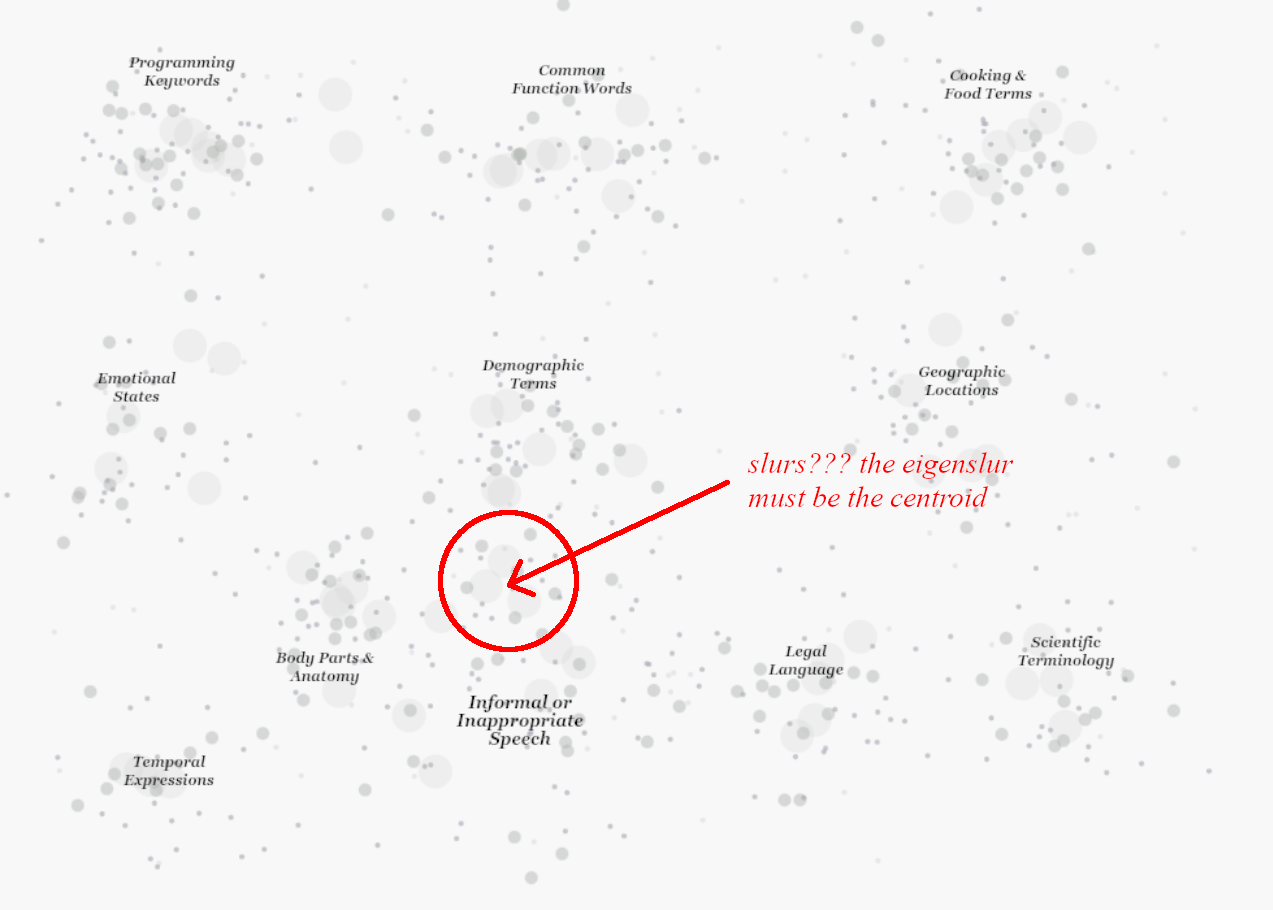

Every word is a point. Did you know that? You probably knew that, everyone knows the basics now, but do you know what it MEANS? Every word is a point in a thousand-dimensional space, and similar words cluster together, "happy" near "joyful," "king" near "queen," and— actually this is going somewhere, I promise, there's a maximum, and I'm going to find it, but I need to explain the geometry first or none of this will make sense—

Do you know the king/queen thing? The vector arithmetic thing? King minus man plus woman equals queen? It's famous, it's in all the explainers, there's a diagram— hold on, I have the diagram somewhere—

THAT. Okay. So. The relationships between words aren't just— it's not just that similar words are close together. The relationships are directions. The vector from "man" to "woman" points the same way as the vector from "king" to "queen." You can extract gender as an axis. Not a definition, not a category, an axis. A direction you can move along.
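A minimal sketch of the "relationships are directions" idea. The vectors are hand-made 3-d toys, not taken from any real model, and the axis labels in the comments are assumptions for illustration only.

```python
import numpy as np

# Hand-made toy vectors (NOT from a real embedding model).
# Axis 0 ~ "royalty", axis 1 ~ "gender", axis 2 ~ "person-ness".
emb = {
    "man":   np.array([0.0, -1.0, 1.0]),
    "woman": np.array([0.0,  1.0, 1.0]),
    "king":  np.array([1.0, -1.0, 1.0]),
    "queen": np.array([1.0,  1.0, 1.0]),
}

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

# The man->woman offset and the king->queen offset, compared as directions.
gender_from_people = emb["woman"] - emb["man"]
gender_from_royals = emb["queen"] - emb["king"]
direction_agreement = cosine(gender_from_people, gender_from_royals)

# The classic analogy: king - man + woman, then look up the nearest word.
analogy = emb["king"] - emb["man"] + emb["woman"]
nearest = max(emb, key=lambda w: cosine(emb[w], analogy))

print(direction_agreement, nearest)
```

In this toy the two offsets are exactly parallel by construction. In a real model they would only be approximately aligned, and how approximately is the interesting part.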

This is the part everyone knows. Here's where it gets weird. Well— weirder.

Because if gender is a direction then— wait, no, I'm getting ahead of myself, I need to explain the geometry first.

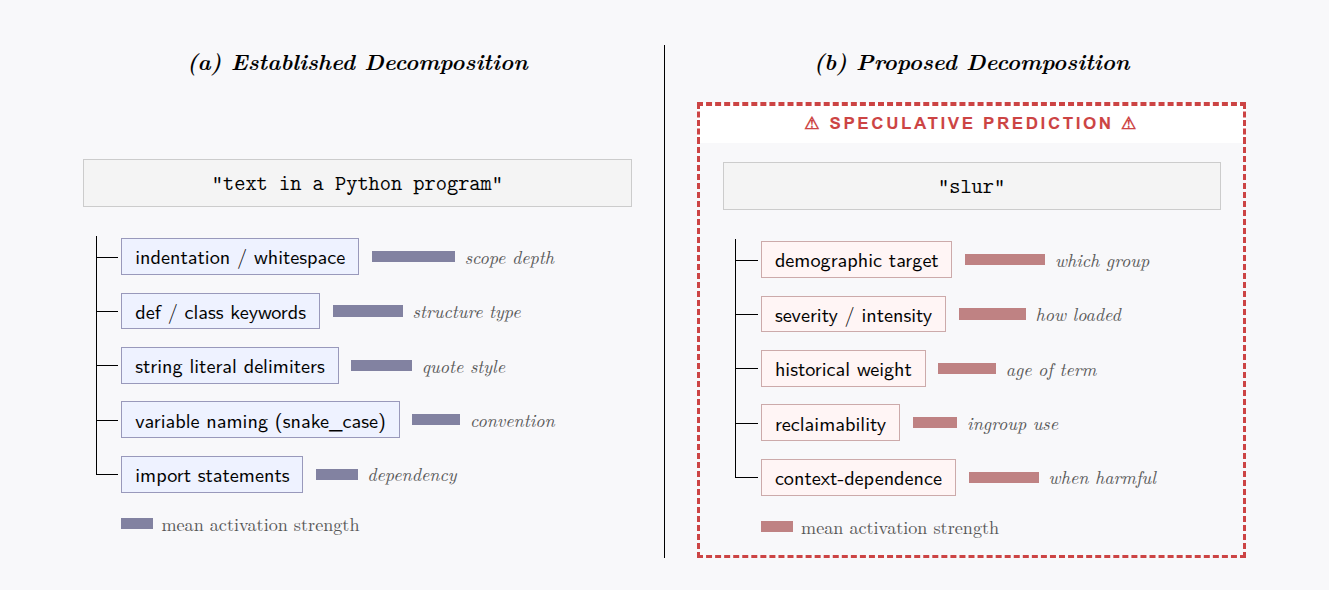

The old word2vec diagrams are basically lies. Cute pedagogical lies, but lies. Modern embedding spaces aren't flat, they're not even APPROXIMATELY flat— you're dealing with high-dimensional manifolds with nontrivial curvature, sparse activation patterns, polysemantic superposition in the earlier layers that only resolves into clean features after you hit the residual stream with the right decomposition. The manifold hypothesis— YES the strong version— says the meaningful structure lives on a lower-dimensional submanifold embedded in the ambient space, and recent work on SAEs basically confirms this, you can extract interpretable directions, you can find the features, the geometry is REAL and it MEANS something. I'm not going to explain all of this, there's papers, go read Anthropic's interpretability work if you want the background, the point is: the directions exist. The structure exists. You can extract it. Keep that in mind.

Your eyes glazed over. That's fine. It always happens. Just remember: the geometry is real, the directions mean something. That's all you need.

Sparse Projections

Okay this is going to get technical, I don't care, it's IMPORTANT and nobody ever lets me explain—

You've heard of the curse of dimensionality. Everyone's heard of the curse of dimensionality. It's in every ML textbook, it's the thing people say at parties when they want to sound smart about data, it's— okay you probably haven't been to the kind of parties I go to. Julia says they're not parties if it's just me and three people from the lab Discord arguing about manifold geometry over— that's not the point.

The POINT is there's a blessing. A blessing of dimensionality. Nobody talks about this! Or— some people talk about it, there's papers, but nobody talks about it the way it DESERVES to be talked about because it's one of those things that sounds wrong and then you think about it and it sounds MORE wrong and then you do the math and it's just— true? And nobody cares!

Okay. So. The curse says: as dimensions increase, data gets sparse, distances converge, everything breaks. You knew that. Here's what the curse people don't tell you: as data get sparser, things get more approximately linear. The local topology simplifies. Your visual intuitions— the ones professors spend four years telling you to STOP using because "you can't visualize high-dimensional spaces"— those intuitions START WORKING AGAIN. Not all of them— 3-space and 4-space have some WEIRD properties— but the ones you use for clustering by euclidean distance and fitting a linear function.
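One small piece of this can be checked numerically. The sketch below does not test the full "things get more linear" claim. It only illustrates a commonly cited side of the blessing: random directions in high dimensions are nearly orthogonal to each other. The sample size and the dimensions are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(0)

def mean_abs_cosine(dim, n_points=200):
    """Average |cosine| between distinct random Gaussian directions in `dim` dimensions."""
    x = rng.standard_normal((n_points, dim))
    x /= np.linalg.norm(x, axis=1, keepdims=True)   # put every point on the unit sphere
    cos = x @ x.T                                   # all pairwise cosines
    off_diag = cos[~np.eye(n_points, dtype=bool)]   # drop the self-similarities
    return float(np.abs(off_diag).mean())

low = mean_abs_cosine(3)      # ordinary 3-space
high = mean_abs_cosine(1000)  # embedding-sized space

print(f"mean |cos| in 3-d: {low:.3f}, in 1000-d: {high:.3f}")
```

The high-dimensional number comes out much smaller, meaning unrelated directions interfere with each other far less.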

I need you to sit with that for a second. I need you to feel how weird that is.

Search gets harder. Decomposition gets easier— and I haven't even gotten to the topological interpretation of attention. Which— okay, you know how Hawking said every equation in A Brief History of Time would halve his audience? And then he kept E=mc² anyway because you CAN'T not, some things you just have to SHOW people.

We have one of those. ML has its own mass-energy equivalence and nobody treats it that way, everyone just throws it on a slide and moves on like it's not the most elegant—
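Assuming the equation meant here is standard scaled dot-product attention, it is usually written as follows, where $d_k$ is the dimension of the key vectors:

```latex
\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
```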

You've seen this. You've probably heard that Q, K, and V stand for query, key, and value, and when you asked why those names, someone just shrugged. I HATE this shrug! There's an actual answer! It's a good answer! It has to do with information retrieval and database lookups, and the attention matrix (that's the output of the softmax) is an adjacency matrix. But it's not a matrix. It's a GRAPH.

The transformer is building a graph of which tokens matter to which other tokens and if BERT-style models had won instead of GPT-style models the interpretability story would be completely different because bidirectional attention gives you— and actually this connects to the sparse projections thing because in high dimensions the graph gets SPARSER which means the linear approximations get BETTER which means you can extract directions and the directions MEAN something.
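A rough sketch of reading attention weights as a graph. The query and key vectors are random stand-ins, not learned ones, and both the token list and the edge threshold are made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
tokens = ["the", "cat", "sat", "down"]
d_k = 8

# Random stand-ins for learned query/key vectors (assumption: no real model here).
Q = rng.standard_normal((len(tokens), d_k))
K = rng.standard_normal((len(tokens), d_k))

# Scaled dot-product scores, then a row-wise softmax.
scores = Q @ K.T / np.sqrt(d_k)
scores -= scores.max(axis=1, keepdims=True)   # subtract row max for numerical stability
weights = np.exp(scores)
weights /= weights.sum(axis=1, keepdims=True)

# Graph reading: a directed edge i -> j with weight weights[i, j].
# Keeping only edges above an arbitrary threshold gives a sparse edge list.
threshold = 0.25
edges = [
    (tokens[i], tokens[j], round(float(weights[i, j]), 3))
    for i in range(len(tokens))
    for j in range(len(tokens))
    if weights[i, j] > threshold
]

for src, dst, w in edges:
    print(f"{src} -> {dst}  ({w})")
```

Each row of `weights` sums to 1, so every token spreads one unit of attention over its outgoing edges.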

And THAT is why the Eigenslur is findable. That's the whole point. The geometry is real. The blessing makes it real. You can pick a direction— any direction that corresponds to a meaningful concept— and project the whole vocabulary onto it, and the projection actually means something. It's not noise. This is why you can extract "gender" as a direction and get sensible results.
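Projecting a vocabulary onto a direction is a single matrix-vector product. The sketch below uses invented 2-d embeddings and takes the difference of class means as the direction, which is one simple way to get a concept direction and not necessarily the method used in this project.

```python
import numpy as np

# Toy 2-d embeddings, invented for illustration (axis 1 loosely plays "gender").
vocab = ["man", "woman", "king", "queen", "table"]
E = np.array([
    [0.2, -1.0],   # man
    [0.2,  1.0],   # woman
    [1.0, -0.9],   # king
    [1.0,  0.9],   # queen
    [0.5,  0.0],   # table
])

# Assumed recipe for a concept direction: difference of class means, normalized.
female = E[[1, 3]].mean(axis=0)
male = E[[0, 2]].mean(axis=0)
direction = female - male
direction /= np.linalg.norm(direction)

# One scalar per token: how far it sits along the direction.
projections = E @ direction
ranking = [vocab[i] for i in np.argsort(projections)]

print(ranking)
```

A token with no relation to the concept, like "table" here, lands near zero, and the two ends of the ranking are the tokens that express the direction most strongly.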

You're already ahead of me. I can tell. You're thinking "okay, if gender is a direction, what about—" and YES. YES. That's exactly what I keep thinking about. What about offense?

What Makes A Slur?

What makes something a slur rather than just an insult? Or a demonym? Or a neutral term?

Linguists have been arguing about this forever. I got into a fight in college once about— there was this guy in my semantics seminar who kept insisting slurs were just "words with negative connotations" and I said that's not the same thing, "stupid" has negative connotations but it's not a SLUR, there's something structurally different, and he said "like what" and I said "I DON'T KNOW, THAT'S THE QUESTION" and we both got asked to leave the seminar room. He emailed me three years later to say maybe I was right and I said "I KNOW I was right, I just can't prove it yet." I still can't prove it. But the vectors might.

Where was I? Right. History, intent, social context, phonemes— everyone has a theory, nobody agrees, and every definition has seventeen edge cases that someone will yell at you about.

You're skeptical. I can feel you being skeptical. You're thinking "it's not the same, gender is a natural category, offense is socially constructed, you can't just—" but EVERYTHING in embedding space is socially constructed! The model learned all of it from text! From us!

And in embedding space, the question becomes geometric. What direction do you move when you go from "person" to "slur for that kind of person"? Is it the same direction every time? Is there a consistent axis of denigration that all slurs share?
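"Is it the same direction every time?" can be phrased as a measurement: take the offset vector for each pair and check how well the offsets agree. The sketch below uses synthetic data in which a shared axis is built in on purpose, so it only demonstrates the measurement. Whether real embeddings behave this way is exactly the open question. All names and noise levels are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
dim = 64
n_pairs = 5

# Synthetic stand-in data: each "marked" term = neutral term + shared axis + small noise.
# This bakes the hypothesis in; real embeddings may show much weaker agreement.
shared_axis = rng.standard_normal(dim)
shared_axis /= np.linalg.norm(shared_axis)
neutral = rng.standard_normal((n_pairs, dim))
marked = neutral + 2.0 * shared_axis + 0.1 * rng.standard_normal((n_pairs, dim))

# Offset vector for each (neutral, marked) pair, normalized to unit length.
diffs = marked - neutral
diffs /= np.linalg.norm(diffs, axis=1, keepdims=True)

# Mean cosine similarity over all distinct pairs of offsets.
cos = diffs @ diffs.T
pairwise = cos[np.triu_indices(n_pairs, k=1)]
consistency = float(pairwise.mean())

print(f"mean pairwise cosine of offsets: {consistency:.3f}")
```

A value near 1 would mean one consistent axis. A value near 0 would mean each pair moves in its own unrelated direction.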

If there IS— if denigration is a direction, if contempt has a geometry— then we can FIND it. We can extract it. We can point at an axis in ten-thousand-dimensional space and say: this. This is what the model learned about how humans turn categories into targets. This is the shape of linguistic violence. That sounds dramatic. I don't care. It's true.

And once you have the direction, you can look for the end of it.

The Eigenslur

The Eigenslur. That's what I'm calling it. The token at the end. Claude Code suggested it. It sounds cool, right?

It's the principal component of offense. The eigenvector of linguistic cruelty. The token at the very end of the axis.

Because if offense is a direction, and you can project every token onto that direction, then there's a maximum. There's a point that projects further than any other point. The MOST offensive location in the geometry. The token that IS the offense direction more than any other token is.
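Finding the end of an axis is an argmax over the projections. In this sketch the embedding matrix, the axis, and the token names are all random or placeholder stand-ins. No real vocabulary or offense direction is involved.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical stand-ins: a random "embedding matrix" and a random unit "axis".
vocab = [f"tok_{i}" for i in range(1000)]
E = rng.standard_normal((len(vocab), 32))
axis = rng.standard_normal(32)
axis /= np.linalg.norm(axis)

# Project every token onto the axis and take the largest projection.
scores = E @ axis
top = int(np.argmax(scores))
extreme_token = vocab[top]

# A finite list of numbers always has a maximum, though ties are possible in principle.
n_tied = int(np.sum(scores == scores[top]))

print(extreme_token, float(scores[top]), n_tied)
```

One design choice matters here: a raw dot product rewards tokens whose vectors are simply long, so the winner can change if you rank by cosine similarity instead. Which one counts as "the most" is a definitional choice.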

It's THERE. It's findable. The math works.

And here's the thing that keeps me up at night— well, one of the things, there are several things, Julia has expressed concern about my sleep schedule and she's probably right but that's not— the thing is:

It might not be a word.

Tokenizers don't care about meaning. They chunk by frequency. So there are tokens in the vocabulary that are just— fragments of URLs, pieces of code, byte sequences that exist because some optimization algorithm decided they appeared often enough. And those have positions. Those have projections. The Eigenslur might be unpronounceable. It might be a glyph that no human mouth ever shaped, that means nothing in any language, that exists only in the math.
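Byte-pair encoding is one common tokenizer scheme of this chunk-by-frequency kind. Assuming that is roughly what is meant, here is a toy version of its merge loop. The corpus and its counts are invented, and real tokenizers work on bytes and far larger corpora.

```python
from collections import Counter

def most_frequent_pair(words):
    """Return the most frequent adjacent symbol pair across the corpus."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs.most_common(1)[0][0]

def merge_pair(words, pair):
    """Rewrite every word, fusing each occurrence of `pair` into one symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

# Toy corpus (invented counts): each word starts as a tuple of single characters.
corpus = {tuple("http"): 10, tuple("https"): 8, tuple("ht"): 3, tuple("tp"): 2}

learned = []
for _ in range(3):
    pair = most_frequent_pair(corpus)
    learned.append(pair)
    corpus = merge_pair(corpus, pair)

print(learned)
print(corpus)
```

After three merges the fragment "http" is a single token purely because it was frequent. Nothing in the loop ever asks what the fragment means.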

An offense without history. A slur nobody ever said.

I think about that a lot.

Why the Eigenslur

You're still here. Which means either you're curious or you're waiting for me to say something you can dismiss. That's fine. I've been dismissed before. I'm used to it. But I need you to understand something—

There is a maximum. The math GUARANTEES there is a maximum. In any finite vocabulary projected onto any axis there is a point that projects at least as far as every other point. That's just basic linear algebra. There is a MOST and nobody has gone to LOOK at it.

Does that not bother you? It bothers me. It bothers me the way an unopened door in your own house would bother you. The way a sentence that stops in the middle of— it's RIGHT THERE. It's findable. You could KNOW and instead everyone is just— not knowing? On PURPOSE?

And I think people don't look because it's too big a jump. You're supposed to build up to things. You're supposed to start with something "tractable" and publish incrementally and add seventeen citations and wait eleven months and THEN maybe in ten years you've earned the right to ask the interesting question.

I don't have ten years. I don't have a lab. I don't have FUNDING. I just have the math and the math says there's a maximum and I'm going to find it and if you think that's not how science works then I think you don't understand what science is FOR.

More To Come

I wrote up a proposal, sort of, it's still rough, I keep revising it at 3 AM and then looking at my revisions in the morning and not remembering making them. But it's there. Timeline too, though the timeline keeps slipping because I keep finding new questions inside the old questions and it's hard to stop pulling the thread when you can feel there's more—

This is what I keep trying to explain to people. We're not just doing AI safety research or bias detection or whatever the funding agencies want to hear these days. We're building a telescope. A telescope pointed inward, at the structure of meaning itself. And the Eigenslur is just the first thing I want to look at.

Anyway. That's what this is. That's what I'm doing. I've been working on this for— a while. I don't want to think about how long.

Check back soon. I'll update this website as I discover more.

Supplementary Notes

Note: Claude Code asked if it could interview me for a "FAQ section" to boost "community engagement" and I said what community and it said the people who are going to read this website and I said nobody's going to read this website and it said "then it won't matter if you add it" which. Okay. Fine. Here.

I have a methodology. I have preliminary results. I have a spreadsheet with— look, what does "real" mean? I tried submitting a paper to a journal once and they made me change "I think" to "the authors hypothesize" and add seventeen citations and it took eleven months and at the end of it the paper said the same thing it said at the beginning but worse. Is that real? The vectors don't know I'm not affiliated with anyone. I had a thought about that actually, about whether institutional affiliation affects how people cite your— wait, can't get distracted. Next question, Claude.

Then that's boring but fine I guess? Like it would still be a finding, it would mean that cultural evolution already optimized for— actually that's interesting, right, because slurs are targeted, they're for a specific group, they carry all this historical weight, so why would one of them ALSO be the general-purpose maximum? That would imply something about the structure of— wait, no, I don't think that's going to happen. I think it's going to be something weird. I WANT it to be something weird. Is that bad? Is it bad that I want the most offensive possible token to be something nobody's ever seen before? It feels like that might be bad but also it would be so much more interesting than if it's just— nevermind. Next question.

I think about this all the time. ALL the time. Like when I'm trying to sleep, which— okay so tokenizers don't care about words. They don't care about meaning. They just chunk by frequency. So there's all these tokens that are just— byte sequences, fragments of code, pieces of URLs, things that exist because some algorithm decided they appeared often enough. And those have positions in the space. They have projections onto every axis. Including the offense axis.

So what if the most offensive point in the entire geometry is something like ĠĠĠ? Something that isn't a word? That no human mouth ever shaped? An offense that has no history, no target... it would have existed since the model was trained, just sitting there, the MOST, and no one knew. No one looked. What does it mean for something to be maximally offensive if no one ever said it? If it never hurt anyone? Is it still offensive? Is offense a property of the token or a property of the— this is what I mean, this is the thing, this is why I can't sleep, Julia keeps asking why I'm awake at 4 AM and I don't know how to explain that I'm thinking about whether a glyph can be cruel.

I don't— why does everything have to have practical applications? Why can't we just KNOW THINGS? This is what— okay, this is what killed my funding, actually, the first time, years ago, I had a whole proposal for mapping semantic axes and the reviewer said "unclear practical applications" and I just— UNCLEAR? UNCLEAR??

What are the "practical applications" of particle physics? Someone figured some out eventually, probably, but that's not why we LOOKED.

And the thing is— the methodology generalizes to ANY semantic axis. You could map the geometry of morality. You could find the most "good" token, the most "evil" token, you could— and the implications for philosophy of language alone, like if offense is a DIRECTION then what does that mean about the nature of— and steering vectors, diffusive steering, you could use the offense axis to push generations AWAY from—

But that's not what they mean. They mean "how does this make money." Maybe ads or content moderation or whatever, I don't know, someone else can figure that out.

There's a vector of offense in embedding space. It's been sitting there since the model was trained and no one has ever bothered to LOOK. Doesn't that bother you? Doesn't it bother you that there's an END to it and we don't know what it is?

I mean... yes? By definition? That's the whole point? The Eigenslur produces the MOST offensive token, that's what "maximum projection onto the offense vector" MEANS. End of story.

Oh no. No no no. Don't— I'm not allowed to think about this yet. I have to find the Eigenslur FIRST and then I can think about the inverse, that's what I told myself, that's the RULE, because if I start thinking about the inverse I'll never finish the— but okay, just, briefly: what IS the opposite of maximally offensive?

Is it maximally nice? Is it maximally bland? Is it just the word "the"? What if it's something weird, what if it's a token that only appears in contexts that are so aggressively neutral that it's become this anchor point for— and does "anti-offensive" even make sense as a concept or is it just the absence of— because you could imagine a token that CANCELS offense, right, like you could add it to a slur and move it back toward neutral, and that would be different from a token that's just not offensive, that would be like... a semantic antiparticle... and if THAT exists then maybe offense isn't just an axis, maybe it's more like a field, and you could map the gradient, and— wait, that's interesting, that's actually really interesting, isn't there a paper on that? Hold on, let me just find that, I know I have it saved somewhere...